Publications

Research and Analysis Publications

We have hard copies of many of our research and analysis publications. Inquiries are welcome.

National Security Analysis Department

Nuclear Forces for 2050 → Dennis Evans, William Kahle, and Jonathan Schwalbe

This report provides an overview of the US nuclear programs of record. There have been many adverse changes in the world security environment in the last few years. All the US nuclear programs of record originated before these adverse developments occurred, however, and there have been no new programs or increases in procurement objectives in response to undesirable external factors. Consequently, this report addresses questions pertaining to the nuclear forces that the United States might need in the 2040s and beyond after accounting for the probable future security environment. These forces include intercontinental ballistic missiles (ICBMs), submarine-launched ballistic missiles (SLBMs), ballistic missile submarines (SSBNs), bombers, cruise missiles for bombers (namely the Long-Range Standoff, or LRSO, cruise missile), nonstrategic nuclear weapons (NSNWs), and the nuclear-warhead infrastructure. This report identifies potentially valuable enhancements the US Department of War should consider in its Nuclear Posture Review and Program-Budget Review for the Fiscal Years 2028–2032 Future Years Defense Plan. It also identifies potential future study topics. The overarching US goals for strategic programs should be to maintain central deterrence, extended deterrence, and strategic stability. The programs of record should enable these goals. This alignment, however, might benefit from (or even require) unfunded upgrades. For example, it could require new programs, improvements in the ratio of lethality to collateral damage, increases to procurement objectives, measures to improve the prelaunch survivability of US nuclear forces, and upgrades to the capability and capacity of the National Nuclear Security Administration (NNSA) in order to guarantee an adequate inventory of nuclear warheads in 2050 and beyond.

National Security Analysis Department

Surfing the Wave: Resilience Strategies for the Decentralizing Grid → Paul Stockton

National security has played a minuscule role in driving the deployment of millions of solar power installations, along with batteries and advanced control systems. Yet, by leveraging the nationwide deployment of these assets, and accelerating construction of natural-gas-fired generators, nuclear plants, and other resources, the United States can sharply increase the number of targets that China must disrupt to create catastrophic blackouts and, potentially, transition to an inherently more survivable grid. Decentralization is no panacea, however. Chinese-manufactured batteries and other distributed energy resources riddle the US electric system. Power electronics that control these resources also create new cyberattack surfaces. Moreover, with electric vehicles and other controllable sources of demand, China can manipulate power consumption to magnify the impact of supply-side attacks. All three threat vectors could create simultaneous grid disruptions across the nation, rendering decentralization useless. Utilities, regulators, and their product vendors are taking steps to meet these challenges. Especially valuable is that US manufacturers are selling electric distribution companies and other customers products that meet increasingly stringent cybersecurity guidelines. But state regulators have barely begun requiring compliance with them. Building on existing voluntary guidelines, the Department of Energy, industry, and regulators should establish security requirements to counter catastrophic threat vectors and transform grid decentralization into a bulwark of national security.

National Security Analysis Department

Estimating Foreign Military Investment → Rodney Yerger, Mark Hodgins, and Jessica Ma

Understanding adversaries’ military investment is critical to understanding their decision-making and to formulating sound US national defense strategies and policies. This understanding is complicated, however, by adversaries’ lack of transparency about and reporting of their defense spending. The Johns Hopkins Applied Physics Laboratory (APL) developed an updated methodology to estimate adversary, or red, defense spending—in particular China’s spending. By better accounting for factors such as central government control of the economy and of parts of industry and academia, APL’s approach results in a significantly higher cost estimate than other prominent methodologies. The APL estimate for China’s total military budget is approximately $500 billion, nearly 100 percent greater than estimates in widely cited sources. Moreover, the APL estimate equates to roughly 66 percent of the US military budget. Although significant uncertainty surrounds the estimate, a series of cross-checks suggests that the estimate is reasonable and, in some cases, may actually be conservative. These findings are consistent with China’s rapid expansion of military capability and suggest that the United States and China may be on more equal footing in terms of military defense spending than other estimates suggest.

National Security Analysis Department

Wizard Warfare: Ukrainian Technological Developments Overview → Stephen DeCoste and Jeff Miers

This document provides an overview of the technological innovations in Ukraine developed as a consequence of the Russian invasion in February 2022. The team focused on the first year of the war to examine what new technologies and technological strategies Ukraine used to fight the Russian invasion. What insights can the United States gain from Ukraine in its own efforts to innovate?

National Security Analysis Department

Balancing Act: Assessing Risks and Governance of Climate Intervention → Michael Porambo, Stephanie Tolbert, Lauren Ice, Fred Rosa Jr., Victoria Wu, and Amanda Emmert

Nations, organizations, and individuals may soon look to climate intervention, also known as geoengineering, as a means to avoid the most severe effects of climate change. Despite the hope that climate intervention may prevent the increasingly dire climate change projections from becoming reality, the efficacy of many climate intervention methods remains uncertain. Moreover, many methods pose their own risks to the environment, global ecosystems, and critical human systems. These uncertainties and risks, when combined with the relatively few barriers to unilateral deployment for many methods, drive the need for national and international regulation of climate intervention research, testing, development, and deployment. This report summarizes the effects of two controversial climate intervention methods—stratospheric aerosol injection and ocean iron fertilization—on national security, considering their abilities to both stop and reverse the effects of climate change and the possible direct, unintended environmental changes. It then examines governance principles for climate intervention from a combined national security and technical perspective, deriving principles for addressing climate intervention research, governance, and possible use and making recommendations for the path forward.

National Security Analysis Department

Whatever Happened to Nuclear Winter? → James Scouras, Lauren Ice, and Megan Proper

In 1983, Turco, Toon, Ackerman, Pollack, and Sagan (TTAPS) published “Nuclear Winter: Global Consequences of Multiple Nuclear Explosions” in Science magazine, launching a fierce debate among scientists and policymakers for the remainder of the decade. Through modeling various nuclear exchange scenarios between the Soviet Union and the United States, TTAPS concluded that the sooty smoke produced from fires and lofted into the stratosphere could dramatically reduce the average temperature over large portions of Earth’s surface for months, thereby plunging the world into a “nuclear winter.” After the intense attention in the 1980s, the nuclear winter debate and scientific research largely died down after the end of the Cold War. Scientific study was rekindled and refocused in the 2000s amid concerns about the nuclear risk associated with the growing arsenals of India and Pakistan. However, despite the significant climatic consequences predicted by these regional nuclear exchange studies, based on publicly available information, government interest in nuclear winter has remained low. We undertook a comprehensive literature review to (1) understand the evolution and current state of nuclear winter research and policy analysis; (2) understand the apparent loss of government interest since the end of the Cold War; and (3) assess alternative future courses of action. We find that while nuclear winter is potentially the most severe consequence of nuclear war, the science is still fraught with uncertainties that have undermined its acceptance. The initial widespread interest waned because of a combination of factors, principally the end of the Cold War but also the impracticality of policy solutions and the problematic mixture of science and politics. We recommend renewed consideration of nuclear winter in policy formulation and a sustained research program to reduce uncertainties.

National Security Analysis Department

Constructing an Elicitation on the Risks of Weapons of Mass Destruction: Lessons From Analyzing the Lugar Survey → Jane Booker, Lori Baxter, and James Scouras

In 2005, Senator Richard Lugar polled 85 experts to quantify their weapons of mass destruction risk perceptions and identify points of convergence and divergence, with the overarching goal of drawing increased attention to the need for greater nonproliferation efforts. The results of this survey are presented in The Lugar Survey on Proliferation Threats and Responses, and the contents of that report are the focus of our analysis efforts. We also examine nearly two decades of citations of this report from 2005 to mid-2023. An online appendix summarizes our literature search.

Senator Lugar was clear in cautioning that the survey was a political effort, rather than a “scientific” survey. And it has been successful in its goal of encouraging dialogue on proliferation threats. We find, however, that most documents citing the Lugar survey have ignored Senator Lugar’s caution by taking its results at face value—in other words, without appropriate caveats—thereby lending greater credibility to it than is warranted. Our examination of the Lugar survey explains the basis for Senator Lugar’s caution, focusing on survey methodology and implementation, as well as the analysis and presentation of results. We offer numerous suggestions for any future survey that aspires to utilize best elicitation and analysis practices.

Read the appendix (authored by Lori Baxter, Jane Booker, and James Scouras).

National Security Analysis Department

Nuclear Winter, Nuclear Strategy, Nuclear Risk → James Scouras, Lauren Ice, and Megan Proper

In this essay, we strive to explain the long-standing practice of intentionally ignoring the potential for nuclear winter in the formulation of US nuclear strategy. To do so, we explore the critical relationships between (1) nuclear winter and (2) nuclear strategy and nuclear risk. We consider the multiple roles of nuclear weapons and how perspectives on nuclear winter affect these roles. We distinguish cases in which neither, only one, or both sides in an adversarial relationship believe nuclear winter would be cataclysmic. Our analysis reveals two primary reasons for ignoring nuclear winter in US nuclear strategy. First, any single nuclear state can only do so much by itself to reduce nuclear winter’s consequences. The second, largely unspoken, reason is that the side believed to be more concerned about the risk of nuclear winter may be at a disadvantage in nuclear crisis management, deterrence, and warfighting. Nevertheless, we argue that prudence dictates we revisit current nuclear strategy. As the risk of nuclear war grows, it is increasingly apparent that we can no longer completely rely on the continued success of deterrence. We must also hedge against its possible failure. The risk of catastrophic nuclear winter must be weighed against the potentially detrimental effects that acknowledging and ameliorating its consequences could have on nuclear strategy.

National Security Analysis Department

Without Precedent: Global Emerging Trends in Nuclear and Hypersonic Weapons → Brian Ellison, Dennis Evans, Matthew Lytwyn, and Jonathan Schwalbe

Russian, Chinese, and North Korean developments suggest a fundamental, adverse change in the world security environment. This is evident in the increased numbers of strategic weapons and delivery systems, the diversity of options, each country’s approach to nuclear posture, and the alert status of each country’s weapons. Unlike in the Cold War, the United States could be faced with needing to deter two or more major adversaries at a time, but with fewer options and a decreased number of overall weapons.

First published in Journal of Policy & Strategy, Volume 3, Number 1, a publication of the National Institute for Public Policy.

National Security Analysis Department

Advanced Estimating Methodologies for Conceptual-Stage Development → Chuck Alexander

This report presents statistical techniques and cost analysis that significantly enhance legacy technology development estimating methodologies. The techniques leverage independent variables that reflect a comprehensive set of cost drivers relating to technology scale, complexity, type, maturity, and development difficulty. The highly tailored solutions including uncertainty vastly expand and refine earlier development estimating models. A general research and development framework relating key milestones, technology readiness levels, and cost benchmarks is constructed and woven into an integrated solution.

National Security Space Mission Area

Cislunar Security National Technical Vision → Edited by Steve Parr and Emma Rainey

This document describes current national needs in cislunar strategy, policy, and technology, with a focus on technology to enable space situational awareness; reconstitution; position, navigation, and timing; and communications missions. It defines current technology needs and challenges for cislunar operations, identifies critical technology gaps, and provides recommendations for near- and long-term technology development at the national level to execute these missions. Technology areas that are specific to a particular mission are discussed in the section for that mission type.

National Security Analysis Department

Wartime Fatalities in the Nuclear Era → Lauren Ice, James Scouras, and Edward Toton

Senior leaders in the US Department of War, as well as nuclear strategists and academics, have argued that the advent of nuclear weapons is associated with a dramatic decrease in wartime fatalities. This assessment is often supported by an evolving series of figures that show a marked drop in wartime fatalities as a percentage of world population after 1945 to levels well below those of the prior centuries. The goal of this report is not to ascertain whether nuclear weapons are associated with or have led to a decrease in wartime fatalities, but rather to critique the supporting statistical evidence. We assess these wartime fatality figures and find that they are both irreproducible and misleading. We perform a more rigorous and traceable analysis and discover that post-1945 wartime fatalities as a percentage of world population are consistent with those of many other historical periods.

First published in Statistics and Public Policy, Volume 9, Issue 1.

National Security Analysis Department

Strategic Arms Control Beyond New START: Lessons from Prior Treaties and Recent Developments → Dennis Evans

The United States has been a party to numerous treaties on nuclear weapons, dating back to the 1960s. These treaties fall into two general categories: treaties that constrain activities (e.g., nuclear testing, placing nuclear weapons in outer space, and nuclear proliferation) and treaties that constrain the number and nature of weapons that the parties can possess. All nine treaties limiting the size and nature of nuclear arsenals (including one treaty that limited missile defense) have been bilateral agreements between the United States and Russia (or the Soviet Union before 1992). During negotiations for strategic arms-control agreements, the key US objectives have been to sustain stable strategic nuclear deterrence and to reduce unnecessary and costly arms races. This report describes all nine of these treaties, with particular focus on the New Strategic Arms Reduction Treaty (New START), the only such treaty that is still in effect. Further, this study analyzes how well these treaties kept up with emerging technology and the security environment of their times, and how well they met the goals just listed. This report then draws lessons from earlier treaties and developments of the last decade to provide considerations for the United States to account for when negotiating whatever treaty follows New START. Finally, many earlier arms-control treaties between the United States and Russia took from two and a half to seven years to negotiate, exclusive of preparatory work to initiate negotiations. The expiration date for New START is February 2026, so the time to begin thinking about arms control beyond New START is now.

National Security Analysis Department

On Assessing the Risk of Nuclear War → Edited by James Scouras

While careful analysis of the likelihood and consequences of the failure of nuclear deterrence is not usually undertaken in formulating national security strategy, general perception of the risk of nuclear war has a strong influence on the broad directions of national policy. For example, arguments for both national missile defenses and deep reductions in nuclear forces depend in no small part on judgments that deterrence is unreliable. However, such judgments are usually based on intuition, rather than on a synthesis of the most appropriate analytic methods that can be brought to bear. This work attempts to establish a methodological basis for more rigorously addressing the question: What is the risk of nuclear war? Our goals are to clarify the extent to which this is a researchable question and to explore promising analytic approaches. We focus on four complementary approaches to likelihood assessment: historical case study, elicitation of expert knowledge, probabilistic risk assessment, and the application of complex systems theory. We also evaluate the state of knowledge for assessing both the physical and intangible consequences of nuclear weapons use. Finally, we address the challenge of integrating knowledge derived from such disparate approaches.

National Security Analysis Department

Operational Analysis for Coronavirus Testing → Marc Mangel and Alan Brown

Testing will remain a key tool for those managing health care and making health policy for the current coronavirus pandemic, and testing will probably be an important tool in future pandemics. Because of test errors, the observed fraction of positive tests, the surface positivity, is generally different from the underlying incidence rate of the disease. We model, using both analytical and simulation tools, the process of testing to address (1) how to go from positivity to a point estimate of the incidence rate; (2) how to compute a reasonable range of possible incidence rates, given the models and data; (3) how to compare different levels of positivity in light of test errors, particularly false negatives; and (4) how to compute the risk (defined as the probability of including one infected individual) of groups of different sizes, given the estimate of incidence rate. Our approach is based on modeling the process generating test data in which the true state of the world (incidence rate, probability of a false negative test, and probability of a false positive test) is known. This allows us to compare analytical predictions with a known situation, thus providing confidence in the tools when they are applied to situations in which the true state of the world is not known.
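
The idea of checking an analytical prediction against simulated test data with a known true state can be illustrated with a minimal sketch. It is not taken from the report: the function names, the parameter values, and the simple two-error test model (each test is independent, with fixed false positive and false negative probabilities) are all assumptions made for illustration.

```python
import random


def expected_positivity(incidence, p_false_pos, p_false_neg):
    """Analytical surface positivity under the assumed two-error model.

    Infected individuals test positive with probability (1 - p_false_neg);
    uninfected individuals test positive with probability p_false_pos.
    """
    return incidence * (1.0 - p_false_neg) + (1.0 - incidence) * p_false_pos


def simulate_positivity(incidence, p_false_pos, p_false_neg, n_tests, seed=0):
    """Simulate n_tests independent tests with a known true state of the world."""
    rng = random.Random(seed)  # seeded so the run is repeatable
    positives = 0
    for _ in range(n_tests):
        infected = rng.random() < incidence
        if infected:
            positive = rng.random() >= p_false_neg  # missed with prob. p_false_neg
        else:
            positive = rng.random() < p_false_pos   # false alarm with prob. p_false_pos
        positives += positive
    return positives / n_tests


if __name__ == "__main__":
    # Hypothetical values chosen only for illustration.
    true_incidence, fp, fn = 0.05, 0.02, 0.20
    print("analytical:", expected_positivity(true_incidence, fp, fn))
    print("simulated: ", simulate_positivity(true_incidence, fp, fn, 200_000, seed=1))
```

With the hypothetical values above, the simulated positivity should land close to the analytical value, and both differ from the true incidence rate, which is the gap the abstract describes.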

National Security Analysis Department

Defeating Coercive Information Operations in Future Crises → Paul Stockton

The US government and its social media partners are bolstering their defenses against foreign election interference and campaigns to corrode democratic governance. Those efforts are vital but inadequate for the emerging security environment. The United States should also account for the risk that in intense regional crises, adversaries will use information operations (IOs) to coerce US and allied behavior. In particular, opponents will seek to convince US and allied policymakers that unless they back down, their nations will suffer punishment that dwarfs any gains they hope to achieve. If adversaries cannot prevail through IOs alone, they may fulfill their threats and launch increasingly destructive cyberattacks, paired with warnings that further punishment will follow until the US and its allies capitulate.

The US military is rapidly improving its ability to conduct coercive operations against US opponents. Yet, the federal government has barely begun to develop strategies and capabilities to defeat equivalent campaigns against us. This study examines the vulnerabilities of the US public and policymaking process to coercive IOs and analyzes Chinese and Russian technologies to exploit these vulnerabilities with unprecedented effectiveness. The study also proposes options to defeat (and, ideally, help deter) future coercive campaigns, in ways that uphold the Constitution and leverage progress already underway against electoral interference and the corrosion of democratic governance.

National Security Analysis Department

Ready for the Next Storm: AI-Enabled Situational Awareness in Disaster Response → Jen Dailey Lambert, Michelle Rose, Jeremy Ratcliff, Megan O’Connor, Tara Kedia, Sophia Oluic, Jeff Freeman, and Kaitlin Lovett

Situational awareness (SA) during disaster response is critical as it enables the response community to rapidly and efficiently assist those in urgent need during the time-sensitive, acute phase of a disaster. New technologies can drastically improve the effectiveness of response operations: satellite imagery to quickly map the destructive path of a hurricane, social media tracking to identify communities of increased need, and computer modeling to predict the route of a wildfire to inform evacuations. The US government has prioritized implementation of artificial intelligence (AI) systems throughout the federal agencies, including those technologies that may assist in disaster response. In this report, we contribute a technological road map for delivering to the response community near- and more distant-future AI-enabled technologies that could aid in SA during disasters. By exploring current and historical technology trends, successes, and difficulties, we envision the benefits and vulnerabilities that such new technologies could bring to disaster response. Given the complexities associated with both disasters and AI-enabled technologies, an integrated approach to development will be necessary to ensure that new technologies are both science driven and operationally feasible.

National Security Analysis Department

National Cyber Defense Center → James Miller and Robert Butler

The United States should establish a National Cyber Defense Center (NCDC) in the Office of the National Cyber Director to proactively address cyber threats to US interests. The NCDC would plan and coordinate US governmental efforts across four areas: cyber deterrence, active cyber defense, offensive cyber operations in support of defense, and incident management. It would also work closely with the private sector, state and local governments, and US allies. Such a proactive and comprehensive approach is needed to deal with cyber adversaries who are exploiting seams within the US government and between the US government and the private sector.

National Security Analysis Department

Tickling the Sleeping Dragon's Tail: Should We Resume Nuclear Testing? → Michael Frankel, James Scouras, and George Ullrich

This report addresses the questions of whether the United States should resume nuclear testing and, if not, whether it should better prepare to do so in the future. Our goal is to provide a comprehensive and balanced consideration of all significant arguments that inform these questions. To place these arguments in the proper context, we briefly recount US nuclear testing history, describing alternative objectives for nuclear tests and providing a taxonomical retrospective of significant surprises encountered—in the nuclear environment, in vulnerabilities of military systems, and in weapon performance and safety. We review as well the critical role played by Science-Based Stockpile Stewardship in lieu of testing and the concerns of its critics. We also describe the current state of nuclear test readiness and assess whether the United States can presently meet its readiness obligations. After considering all significant technical and policy arguments and counterarguments, both for and against test resumption, we conclude that under present circumstances, the United States should not resume nuclear testing because of the lack of a compelling national security need combined with potentially significant negative geopolitical consequences for nuclear proliferation and reignition of a nuclear arms race. However, we identify a series of future technical and political developments whose occurrence would require revisiting our decision calculus. We end the report with recommendations to improve test readiness and, as a final thought, place the issue of whether or not to resume nuclear testing in the context of conflicting far- and near-term US national security goals.

National Security Analysis Department

Operational Analysis for Coronavirus Testing → Alan Brown and Marc Mangel

Even though vaccines for coronavirus are increasingly available, it will be many months before sufficient herd immunity is achieved. Thus, testing remains a key tool for those managing health care and making policy decisions. Test errors, both false positive tests and false negative tests, mean that the surface positivity (the observed fraction of tests that are positive) does not accurately represent the incidence rate (the unobserved fraction of individuals infected with coronavirus). In this report, directed to individuals tasked with providing analytical advice to policymakers, we describe a method for translating from the surface positivity to a point estimate for the incidence rate, then to an appropriate range of values for the incidence rate, and finally to the risk (defined as the probability of including one infected individual) associated with groups of different sizes. The method is summarized in four equations that can be implemented in a spreadsheet or using a handheld calculator. We discuss limitations of the method and provide an appendix describing the underlying mathematical models.
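
As a rough illustration of the kind of spreadsheet-sized calculation described above, the sketch below inverts a simple test-error model to get a point estimate of the incidence rate and then computes a group risk. These are not the report's four equations: the two-error model (positivity = incidence × (1 − false negative rate) + (1 − incidence) × false positive rate), the reading of "risk" as the probability of at least one infected individual among independently sampled group members, and all function and parameter names are assumptions.

```python
def incidence_point_estimate(positivity, p_false_pos, p_false_neg):
    """Point estimate of incidence from surface positivity.

    Assumed model: positivity = r * (1 - p_false_neg) + (1 - r) * p_false_pos,
    solved for the incidence rate r and clipped to the interval [0, 1].
    """
    denom = 1.0 - p_false_neg - p_false_pos
    if denom <= 0.0:
        raise ValueError("requires p_false_pos + p_false_neg < 1")
    r = (positivity - p_false_pos) / denom
    return min(1.0, max(0.0, r))


def group_risk(incidence, group_size):
    """Probability that a group contains at least one infected individual,

    assuming each member is infected independently with probability `incidence`.
    """
    return 1.0 - (1.0 - incidence) ** group_size


if __name__ == "__main__":
    # Hypothetical inputs chosen only for illustration.
    r_hat = incidence_point_estimate(positivity=0.059, p_false_pos=0.02, p_false_neg=0.20)
    for n in (5, 10, 50):
        print(n, round(group_risk(r_hat, n), 3))
```

Clipping keeps the estimate meaningful when the observed positivity falls below the false positive rate, which can happen at low incidence; a range of plausible incidence values would need additional modeling that this sketch does not attempt.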

National Security Analysis Department

The Russian Invasion of the Crimean Peninsula, 2014-2015: A Post-Cold War Nuclear Crisis Case Study → Jonathon Cosgrove

As part of an overall examination of nuclear weapons in post–Cold War crises, this case study examines the role of nuclear weapons in tensions between the United States and Russia during the invasion of Ukraine’s Crimean Peninsula. The initial success of Russian efforts to coerce Ukraine away from association with the European Union (EU) triggered a popular protest movement that led to the removal of Ukrainian president Viktor Yanukovych from office. Faced with a new, pro-Western government in Kiev, Russia immediately moved to invade the Crimean Peninsula. As international tensions rose, both the United States and the EU sought to maintain Ukraine’s territorial integrity through diplomatic and economic means. However, Russia did not accept US nonintervention as a given and sought to deter action to reverse the invasion by brandishing its nonstrategic nuclear arsenal through nuclear messaging, allusions to nuclear first-use policies, drawing of nuclear red lines, and the maneuver of dual-use platforms onto the occupied Crimean Peninsula. By examining the roles that nuclear weapons and their characteristics played throughout the crisis, this case study points to potentially important variables for consideration in future academic studies, and sounds the warning for policy makers on how Russia might leverage its nonstrategic nuclear arsenal in future confrontations.

National Security Analysis Department

“Measure Twice, Cut Once: Assessing Some China–US Technology Connections” Series →

The Johns Hopkins University Applied Physics Laboratory commissioned “Measure Twice, Cut Once: Assessing Some China–US Technology Connections,” a series of papers from experts in specific technology areas to explore the advisability and potential consequences of decoupling.

In each of these areas, the authors have explored the feasibility and desirability of increased technological separation and offered their thoughts on a possible path forward. The authors all recognize the real risks presented by aggressive, and frequently illegal, Chinese attempts to achieve superiority in critical technologies. However, the project also represents a reality check regarding the feasibility and potential downsides of broadly severing technology ties with China.

The project was led by former Secretary of the Navy Richard Danzig, initially in partnership with Avril Haines, former Deputy National Security Advisor. This compilation of papers was authored by experts from across the nation, and the views of the authors are their own.

National Security Analysis Department

South China Sea Military Capabilities Series → J. Michael Dahm

In the information age, the Chinese People’s Liberation Army (PLA) believes that success in combat will be realized by winning a struggle for information superiority in the operational battlespace. China’s informationized warfare strategy and information-centric operational concepts are central to how the PLA will generate combat power. These South China Sea (SCS) military capability (MILCAP) studies provide a survey of military technologies and systems on Chinese-claimed island-reefs in the disputed Spratly Islands. The relative compactness of China’s SCS outposts makes them an attractive case study of PLA military capabilities. Each island-reef and its associated military base facilities may be captured in a single commercial satellite image. An examination of capabilities on China’s island-reefs reveals the PLA’s informationized warfare strategy and the military’s designs on generating what the Chinese call “information power.” The SCS MILCAP series is organized around different categories of information power capabilities, from reconnaissance to communications to hardened infrastructure. Kinetic effects will remain an important component of PLA operational design. However, any challenger to Chinese military capabilities in the SCS must first account for and target the very core of the PLA’s informationized warfare strategy: its information power.

National Security Analysis Department

Responding to North Korean Nuclear First Use: So Many Imperatives, So Little Time → Erin Hahn and James Scouras

What if North Korea were to actually use one or more nuclear weapons? How should the United States respond? The singularly important US prewar objective is to deter nuclear war, but once nuclear weapons have been unleashed, this objective will immediately become moot. US post-nuclear-attack imperatives will likely include (1) physically preventing further use of nuclear weapons by North Korea; (2) cognitively dissuading further North Korean nuclear use; (3) convincing other adversaries that nuclear use is a horrendous idea; (4) allaying allies’ concerns about extended deterrence; (5) satisfying domestic political demands; (6) conforming to international law; and (7) last, and quite possibly least, restoring the nuclear taboo. We address each of these imperatives in turn. Our goal is not to determine the “correct” response to North Korean nuclear first use but rather to identify the principal considerations involved in each of these imperatives. Fulfilling all these diverse imperatives in any particular scenario is highly improbable, so we also briefly address the relative priorities among several of them. We conclude with a discussion of the roles of the research and analysis community, the public, and political and military elites who may find themselves in positions of advising the president in a future nuclear crisis.

National Security Analysis Department

Nuclear War as a Global Catastrophic Risk James Scouras

Nuclear war is clearly a global catastrophic risk, but it is not an existential risk as is sometimes carelessly claimed. Unfortunately, the consequence and likelihood components of the risk of nuclear war are both highly uncertain. In particular, for nuclear wars that include targeting of multiple cities, nuclear winter may result in more fatalities across the globe than the better-understood effects of blast, prompt radiation, and fallout. Electromagnetic pulse effects, which could range from minor electrical disturbances to the complete collapse of the electric grid, are similarly highly uncertain. Nuclear war likelihood assessments are largely based on intuition, and they span the spectrum from zero to certainty. Notwithstanding these profound uncertainties, we must manage the risk of nuclear war with the knowledge we have. Benefit-cost analysis and other structured analytic methods applied to evaluate risk mitigation measures must acknowledge that we often do not even know whether many proposed approaches (e.g., reducing nuclear arsenals) will have a net positive or negative effect. Multidisciplinary studies are needed to better understand the consequences and likelihood of nuclear war and the complex relationship between these two components of risk, and to predict both the direction and magnitude of risk mitigation approaches.

First published in Journal of Benefit-Cost Analysis, Volume 10, Issue 2

National Security Analysis Department

Responding to North Korean Nuclear First Use: Minimizing Damage to the Nuclear Taboo → Erin Hahn, James Scouras, Robert Leonhard, and Camille Spencer

The objective of this workshop, funded by the Defense Threat Reduction Agency (DTRA) through the Project on Advanced Systems and Concepts for Countering Weapons of Mass Destruction, was to address issues associated with responding to the first use of nuclear weapons by North Korea, with an emphasis on restoring the taboo against nuclear use. The Johns Hopkins University Applied Physics Laboratory conducted the workshop on April 23–24, 2019, to bring together thought leaders from a variety of fields, including norm theory and practice, nuclear strategy, and Northeast Asia. Workshop participants considered scenarios involving North Korean nuclear first use and developed and analyzed options for responding to that first use. The workshop concluded with discussions of key questions. Through workshop contributions and post-workshop deliberations, we developed recommendations for DTRA, US Strategic Command, the intelligence community, and the Office of the Secretary of Defense. Primary among these recommendations is that all these organizations take restoration of the nuclear taboo seriously as a US objective after an adversary’s nuclear first use and that they conduct appropriate analyses and planning in advance to provide the president with effective nonnuclear retaliatory options that could reduce the severity and duration of damage to the taboo.

National Security Analysis Department

Game Theory and Nuclear Stability in Northeast Asia → Lauren Ice, James Scouras, Kelly Rooker, Robert Leonhard, and David McGarvey

This study assesses game theory’s potential to contribute to understanding the North Korean nuclear crisis. Previous APL work suggests that game theory provides a useful framework for formulating and analyzing multilateral nuclear stability issues; however, it seldom provides unique policy-relevant insights. For it to do so, game theorists must work closely with policy and subject matter experts. Thus, APL invited game theorists and nuclear and regional experts to (1) discuss the strengths and limitations of game theory applied to the North Korean nuclear crisis; (2) address the policy community’s skepticism about game theoretic analyses; and (3) explore mechanisms for collaboration. We found that game theory, when correctly applied, is a rigorous framework for understanding interactions in international conflicts; however, its predictive capability is limited. Misguided expectations have led to skepticism about its utility. While collaboration between these communities might increase understanding of nuclear crisis dynamics, obstacles include resolving motivational and communication issues, regulating expectations, and avoiding improper applications of game theory.

National Security Analysis Department

Nuclear Deterrence as a Complex System → Edward Toton and James Scouras

We establish that the US system for nuclear deterrence is a complex system in the formal sense, that nuclear deterrence must be regarded as a system-level function, and that the consequence of this is that there is the possibility of system-level failures not obviously connected to any component failures. These are emergent properties not predictable from an understanding of each of its components and interactions that may be candidates for Taleb’s black swan events. To understand the potential risk of failure of the US nuclear deterrence system as it exists in the United States and in the larger context of multiple state actors, it is necessary to understand the potential interactions of components and command authority. For the analyst, this means constructing models that attempt to capture the non-linearities of interactions, the existence of which is increasingly apparent.

National Security Analysis Department

Nuclear Crisis Outcomes: Winning, Uncertainty, and the Nuclear Balance → Kelly Rooker and James Scouras

The binomial distribution is widely used across many different disciplines. In cases where data can be represented with a binomial distribution, an estimate for the binomial distribution parameter (for the probability of success) is often produced. However, uncertainty surrounding this estimate is only sometimes reported, partly due to the opacity of the various methods available for determining this uncertainty. Failing to appropriately analyze uncertainty can lead to erroneous, or at least incomplete, conclusions. Here, we explore both Bayesian and frequentist methods for quantifying uncertainty in the binomial distribution parameter, and discuss each method's various advantages and limitations. Our work is motivated by nuclear crisis outcome data. While nuclear crises have been studied to determine the likelihood of the nuclear-superior, compared to the nuclear-inferior, state winning in a crisis, there is great uncertainty in these estimates for the probability of winning. We demonstrate methods that appropriately quantify such uncertainty and use nuclear crisis outcome data to illustrate applications of the methods we present, as well as to demonstrate insights that can be provided by explicitly analyzing uncertainty.

National Security Analysis Department

Nonstrategic Nuclear Forces: Moving Beyond the 2018 Nuclear Posture Review → Dennis Evans, Barry Hannah, and Jonathan Schwalbe

The United States has a nuclear triad consisting of ballistic missile submarines, land-based intercontinental ballistic missiles, B-52 bombers, and B-2 bombers. At one time, it also had thousands of nonstrategic nuclear weapons (NSNWs) that were not covered by any treaties until the Intermediate-Range Nuclear Forces (INF) Treaty banned several types of US and Soviet weapons in 1987. Today, US NSNWs are limited to unguided bombs on non-stealthy short-range fighters at several bases in NATO countries. Russia, by contrast, has a much larger inventory of NSNWs and is modernizing them. China also has NSNWs, and North Korea either already poses, or soon will pose, a nuclear threat in the western Pacific. This growing asymmetry in NSNWs may pose a threat to NATO and to US allies in the western Pacific. The United States needs to devote more attention to this situation, considering improvements to its NSNWs along with other measures that might help mitigate these asymmetries, such as improved defenses against small nuclear attacks. The United States also needs to consider options for modifications to the INF Treaty in lieu of complete withdrawal.

National Security Analysis Department

A Preface to Strategy: The Foundations of American National Security → Richard Danzig, John Allen, Phil DePoy, Lisa Disbrow, James Gosler, Avril Haines, Samuel Locklear III, James Miller, James Stavridis, Paul Stockton, and Robert Work

During World War II, international threats and national goals were clear. That clarity continued, albeit to a lesser degree, throughout the Cold War and into the new century, with the United States as the world’s preeminent superpower and leader in defense, technology, and economic might. Today’s world is a different place, and the need for a clear picture of it is critical. This paper helps to crystallize that picture by first identifying premises that served processes, institutions, and strategies from World War II through the Cold War, seeking to comprehend our inherited predispositions as predicate for rethinking them. It then identifies changes that undermine these premises. To forge new premises, the authors specify foundational American strengths that must be protected and expanded amid and despite these changes. Finally, the authors suggest premises for a new age of strategic thought. This paper does not recommend a new national security strategy. Instead, it serves as a necessary preface to such a strategy by articulating how our national strengths and weaknesses must be understood as foundations for American security and by showing how the premises that have guided us from World War II to the present must be modified for the future.

National Security Analysis Department

Resilience for Grid Security Emergencies: Opportunities for Industry–Government Collaboration → Paul N. Stockton

Power companies and US government agencies have an unprecedented opportunity to strengthen preparedness for cyber and physical attacks on the electric grid. In December 2015, Congress authorized the secretary of energy to issue emergency orders to grid operators to protect and restore grid reliability in grid security emergencies. These orders could help sustain electric service to military bases and other vital facilities. However, unless the electric industry and the Department of Energy partner to develop emergency orders before attacks occur, they will miss significant opportunities to help deter and (if necessary) defeat such attacks.

This report examines design requirements for emergency orders. It analyzes decision criteria that the president might use to determine that a grid security emergency exists, which is a prerequisite for issuing emergency orders. The report assesses possible orders for three phases of grid security emergencies: when attacks are imminent; when attacks are under way; and when utilities begin to restore power, potentially while facing follow-on attacks. It identifies recommendations to strengthen emergency communications plans and capabilities. It concludes by identifying areas for further analysis, including measures to bolster cross-sector resilience between the grid and the other infrastructure systems and sectors on which it depends.

National Security Analysis Department

Quantifying Improbability: An Analysis of the Lloyd’s of London Business Blackout Cyber Attack Scenario → Susan Lee, Michael Moskowitz, and Jane Pinelis

Scenarios that describe cyber attacks on the electric grid consistently predict significant disruptions to the economy and citizens’ quality of life. Most offer anecdotal support for the grid’s vulnerability to such an attack and assume the existence of an adversary with the means and intent to launch the attack. An estimate of risk, however, also requires knowledge of the probability that an attack of the required caliber can be successfully executed. Quantifying the probability of success for a large-scale cyber attack is hard because of the lack of precedent and the changing nature of threats and vulnerabilities. This report uses the grid cyber attack scenario outlined in the Lloyd’s of London and the University of Cambridge Centre for Risk Studies 2015 report, Business Blackout, to demonstrate how a probabilistic assessment could be used to quantify the likelihood that the scenario could occur. The analysis is subject to the limitations inherent in any probabilistic risk assessment; however, it serves to highlight some interesting phenomena that deserve further investigation, such as the importance of some individual power plants in influencing the adversary’s probability of success. In addition, it describes feasible data collection that would materially increase the validity of such an analysis.

National Security Analysis Department

Parametric Cost and Schedule Modeling for Early Technology Development → Chuck Alexander

There is a need in the scientific, technology, and financial communities for economic forecast models that improve the ability to estimate new or immature technology developments. Engineering design or conceptual technical requirements with which to drive parametric estimates or translate analogous system costs are often unavailable in early life-cycle stages of technology development. The limited availability of comparable systems, design or performance parameters, and other objective bases makes it challenging to produce even rough-order-of-magnitude cost and schedule models. Often compounding the limited availability of information is the proprietary or protected nature of technology research and development efforts and related intellectual property. Consequently, executives, program managers, budget analysts, and other decision-makers must often rely on historical information from related yet often very dissimilar systems or the subjective opinion or “best guess” of subject-matter experts. This paper first investigates available industry modeling concepts, frameworks, models, and tools. A representative project data set is identified and selected for cost and schedule modeling, leveraging macro-parameters generally known or available in early technology development stages. Several model forms are then created and evaluated based on key performance criteria.

National Security Analysis Department

Sony’s Nightmare Before Christmas: The 2014 North Korean Cyber Attack on Sony and Lessons for US Government Actions in Cyberspace → Antonio DeSimone and Nicholas Horton

The cyber attack on Sony Pictures Entertainment in late 2014 began as a public embarrassment for an American company and ultimately led to a highly visible response from national leaders after the purported criminals threatened 9/11-style attacks on movie theaters showing the film. The cyber attack was triggered by the imminent release of The Interview, a comedy by Sony Pictures Entertainment in which an American talk show host and his producer are recruited by the Central Intelligence Agency to travel to North Korea and assassinate Kim Jong-un, the country's supreme leader. The cyber attack was discussed everywhere: from supermarket tabloids, delighting in gossip-rich leaked emails, to official statements by leaders in the US government, including President Obama.

The events surrounding the attack and attribution provide insight into the effects of government and private-sector actions on the perception of a cyber event among the public, the effect of attribution on the behavior of the attackers, and possible motives for North Korea's high-profile cyber actions. The incident also illuminates the role of multi-domain deterrence to respond to attacks in the cyber domain.

National Security Analysis Department

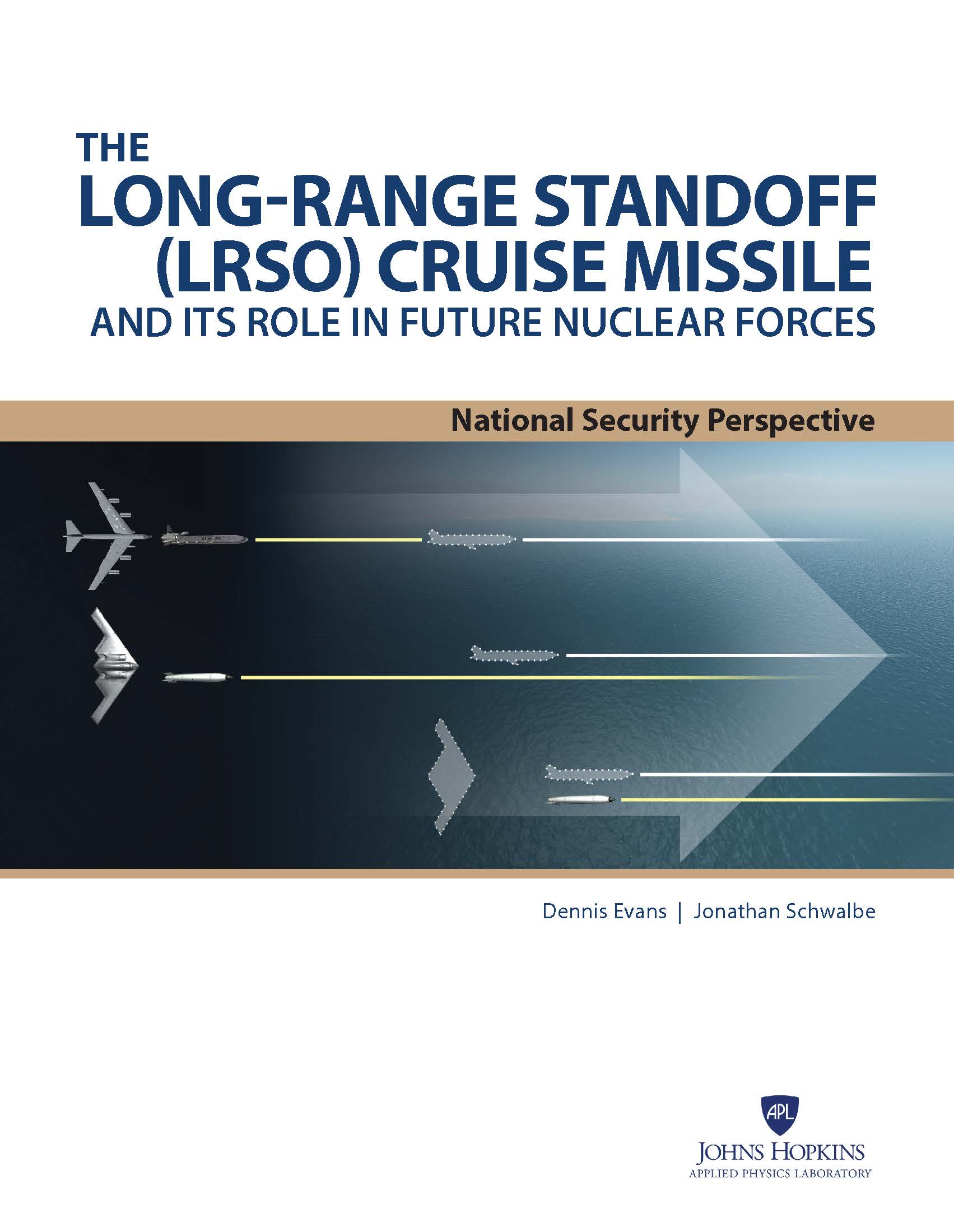

The Long-Range Standoff (LRSO) Cruise Missile and Its Role in Future Nuclear Forces → Dennis Evans and Jonathan Schwalbe

The United States has a nuclear triad that consists of ballistic missile submarines (SSBNs), land-based intercontinental ballistic missiles (ICBMs), B-52 bombers, and B-2 bombers. The non-stealthy B-52 relies entirely on the AGM-86 Air-Launched Cruise Missile (ALCM) in the nuclear role, whereas the B-2 penetrates enemy airspace to drop unguided bombs. The current SSBNs, ICBMs, ALCMs, and B61 bombs will all reach end of life between the early 2020s (for the B61 bomb) and the early 2040s, whereas the B-52 should last until at least 2045 and the B-2 should last until at least 2050. Programs are well under way for a new SSBN, a new bomber, and the B61-12 guided bomb, whereas programs have just started for a new ICBM and for the Long-Range Standoff (LRSO) cruise missile that is planned to replace the AGM-86. Among these programs, the LRSO is the most controversial and (probably) the one at most risk of cancellation. Analyses presented here suggest that LRSO is critical to the future of the triad and should not be terminated or delayed.

National Security Analysis Department

Nonstrategic Nuclear Weapons at an Inflection Point → Michael Frankel, James Scouras, and George Ullrich

The world has changed greatly since the last Nuclear Posture Review (NPR) was formulated only some seven years ago, and US nuclear policy must be responsive to these changes. In particular, the 2010 NPR assessed that Russia and the United States are “no longer adversaries” and their “prospects for military confrontation have declined dramatically.” This assessment has been directly confronted in the intervening years by Russia’s steady stream of nuclear saber rattling, its naked aggression in Ukraine, and its palpably bellicose willingness to project its military might beyond Europe. Moreover, large asymmetries in nonstrategic nuclear capabilities, coupled with Russia’s escalate-to-deescalate doctrine and earlier abandonment of its commitment to a no-first-use nuclear posture, suggest that Russia views nuclear weapons as useful instruments of intimidation and warfighting. We argue that Russian first use of nuclear weapons in Europe should be addressed as a high-priority nuclear threat in the Trump administration’s NPR. We address the roles of allied nonstrategic nuclear weapons in Europe; the challenges posed by asymmetries in numbers, systems, and doctrine; and NATO’s potential response options. Looking forward, we anticipate key nuclear policy decisions the Trump administration must face, and suggest that the issue of nonstrategic nuclear weapons, heretofore treated as a nearly irrelevant epicycle orbiting the greater strategic nuclear issues at play, can no longer be neglected.

National Security Analysis Department

Future Fleet Project. What Can We Afford? → Mark Lewellyn, Chris Wright, Rodney Yerger, and Duy Nhan Bui

Many factors affect the size and makeup of the Navy’s fleet. Not the least of these is the amount of money available to recapitalize the ships and submarines that comprise the fleet. Recent assessments by the Congressional Budget Office show that the funds needed to support the Navy’s current thirty-year shipbuilding plan will need to increase by about a third over the average funds used by the Navy during the past thirty years. This paper explores fiscally constrained modernization strategies for the Navy’s future fleet designed to achieve key priorities, such as recapitalization of the sea-based nuclear deterrent, while minimizing reductions to other components of the plan.

National Security Analysis Department

Superstorm Sandy: Implications for Designing a Post-Cyber Attack Power Restoration System → Paul Stockton


National Security Analysis Department

“Little Green Men”: A Primer on Modern Russian Unconventional Warfare, Ukraine 2013–2014 → Robert R. Leonhard, Stephen P. Phillips, and the Assessing Revolutionary and Insurgent Strategies (ARIS) Team

This document is intended as a primer—a brief, informative treatment—concerning the ongoing conflict in Ukraine. It is an unclassified expansion of an earlier classified version that drew from numerous classified and unclassified sources, including key US Department of State diplomatic cables. For this version, the authors drew from open source articles, journals, and books. Because the primer examines a very recent conflict, it does not reflect a comprehensive historiography, nor does it achieve in-depth analysis. Instead, it is intended to acquaint the reader with the essential background to and course of the Russian intervention in Ukraine from the onset of the crisis in late 2013 through the end of 2014.

National Security Analysis Department

The Uncertain Consequences of Nuclear Weapons Use → Michael Frankel, James Scouras, and George Ullrich

The considerable body of knowledge on the consequences of nuclear weapons use underlies all operational and policy decisions related to US nuclear planning—but very large uncertainties still remain. As a result, the physical consequences of a nuclear conflict tend to have been underestimated, and a full-spectrum all-effects assessment is not within anyone’s grasp now or in the foreseeable future. This work outlines the current state of our knowledge base and presents recommendations for strengthening it.

National Security Analysis Department

The Cyber Dimensions of the Syrian Civil War → Edwin Grohe

This perspective describes cyber operations known to have been used during the Syrian civil war from January 2011 until December 2013. The cyber operations of pro-regime forces, anti-regime forces, and nations providing support, as well as US involvement and its effects on these cyber operations, are discussed as a basis for drawing observations and implications for future conflicts.

National Security Analysis Department

Assessing the Risk of Catastrophic Cyber Attack → Michael Frankel, James Scouras, and Antonio DeSimone

Reflecting on the similarities between cyber and electromagnetic pulse (EMP) attacks, the authors of this Research Note explain how the approach used by the EMP Commission to assess the likelihood and consequences of EMP attacks could provide useful lessons for analysts grappling with the analogous assessment of cyber attacks.

National Security Analysis Department

A Conventional Flexible Response Strategy for the Western Pacific → Brendan Cooley and James Scouras

Uncertainties about China’s rise and the nature of its future relationship with the United States create the need for a grand strategy that simultaneously hedges against alternative futures while making more worrisome futures less probable. A conventional flexible response strategy could provide adequate deterrent capability at the high end of the spectrum of conflict and be better able to manage confrontations at the low end, while nudging China toward integration into the current global order.

National Security Analysis Department

Cross-Domain Deterrence in US–China Strategy → James Scouras, Edward Smyth, and Thomas Mahnken

This report documents the Cross-Domain Deterrence Workshop conducted at APL on June 26, 2013. The overarching objectives of the workshop were to identify (1) the challenges in deterring actions in one domain of warfare (e.g., cyber, space, nuclear, conventional, etc.) by posing retaliatory threats in another domain and (2) research needs and promising research approaches for advancing our understanding of these challenges and forging effective cross-domain policies and strategies. The workshop focused on China, which arguably poses the broadest array of cross-domain deterrence challenges to the United States.

National Security Analysis Department

The New Triad: Diffusion, Illusion, and Confusion in the Nuclear Mission → Michael J. Frankel, James Scouras, and George W. Ullrich

The New Triad, the Department of War's conceptual structure for strategic capabilities, is an impediment to clear thinking, communication, and consensus regarding nuclear issues. Its fatal flaw is the commingling of nuclear and conventional weapons, which lowers the nuclear threshold and undermines deterrence and stability. The vertices of the New Triad appear to represent little more than institutional interests intent on staking out equity, with the primary purpose of promoting the acquisition of controversial capabilities—missile defenses, conventional global strike, new nuclear warheads—rather than comprising the well thought out complementary components of an integrated system. Thus it lacks the intellectual coherence necessary to communicate nuclear policy to the public and to Congress. We recommend that the new Administration scrap the New Triad, divorce nuclear and conventional deterrence, and reserve nuclear weapons for deterring extreme threats and responding to extreme attacks from nuclear states for which no lesser military capabilities suffice.

Workshops and Symposia

Third National Workshop on Marine eDNA

APL and the Smithsonian Institution hosted the third National Workshop on Marine eDNA, a biennial workshop that brings together researchers, practitioners, and policymakers to discuss eDNA technologies and accelerate the incorporation of eDNA science into environmental management applications.

Going Viral: Bioeconomy Defense

APL and the Bioeconomy Information Sharing and Analysis Center hosted a tabletop exercise for experts from the public and private sectors to identify vulnerabilities, recommend mitigations, and establish greater understanding of the threats unique to the bioeconomy. This effort revealed four key areas of action to ensure a safe and secure bioeconomy: trust, awareness, responsibility, and preparedness.

Event Summary from Inaugural National Health Symposium

APL has published the event summary for the inaugural National Health Symposium, which brought together more than 160 experts from government, academia, and industry to discuss ways that advances in research and development can translate into better delivery of health care.

Unrestricted Warfare Symposium 2009 Ronald R. Luman (National Security Analysis Department)

Proceedings on Combating the Unrestricted Warfare Threat: Terrorism, Resources, Economics, and Cyberspace

Unrestricted Warfare Symposium 2008 Ronald R. Luman (National Security Analysis Department)

Proceedings on Combating the Unrestricted Warfare Threat: Integrating Strategy, Analysis, and Technology

Unrestricted Warfare Symposium 2007 Ronald R. Luman (National Security Analysis Department)

Proceedings on Combating the Unrestricted Warfare Threat: Integrating Strategy, Analysis, and Technology

Unrestricted Warfare Symposium 2006 Ronald R. Luman (National Security Analysis Department)

Proceedings on Strategy, Analysis, and Technology

Lab-wide Publications

Annual Reports →

The Annual Report highlights APL’s technical expertise and its contributions to critical challenges.

Technical Digest →

The Johns Hopkins APL Technical Digest is an unclassified technical journal published quarterly by APL. The objective of the publication is to communicate the work performed at the Laboratory to its sponsors and to the scientific and engineering communities, defense establishment, academia, and industry.