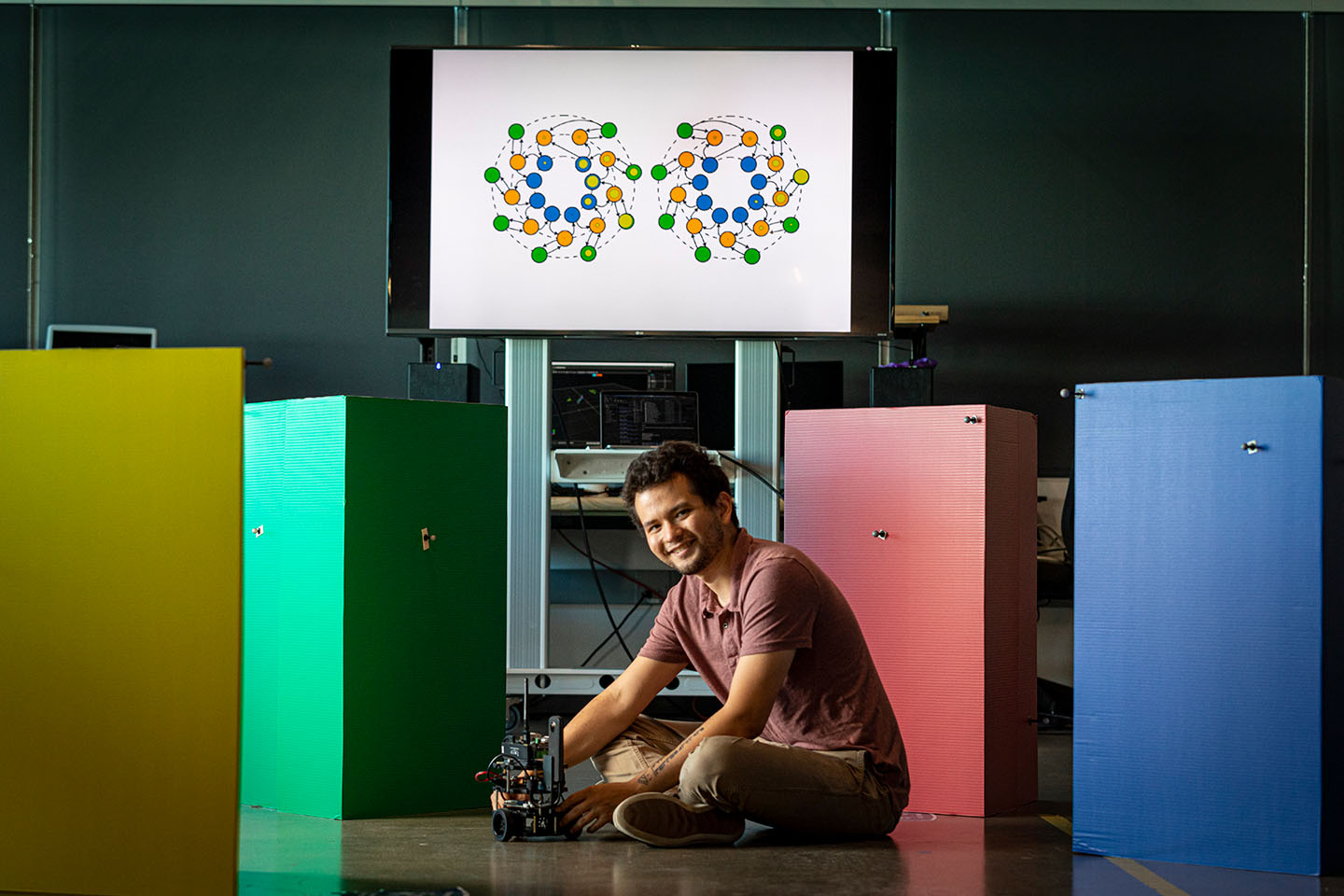

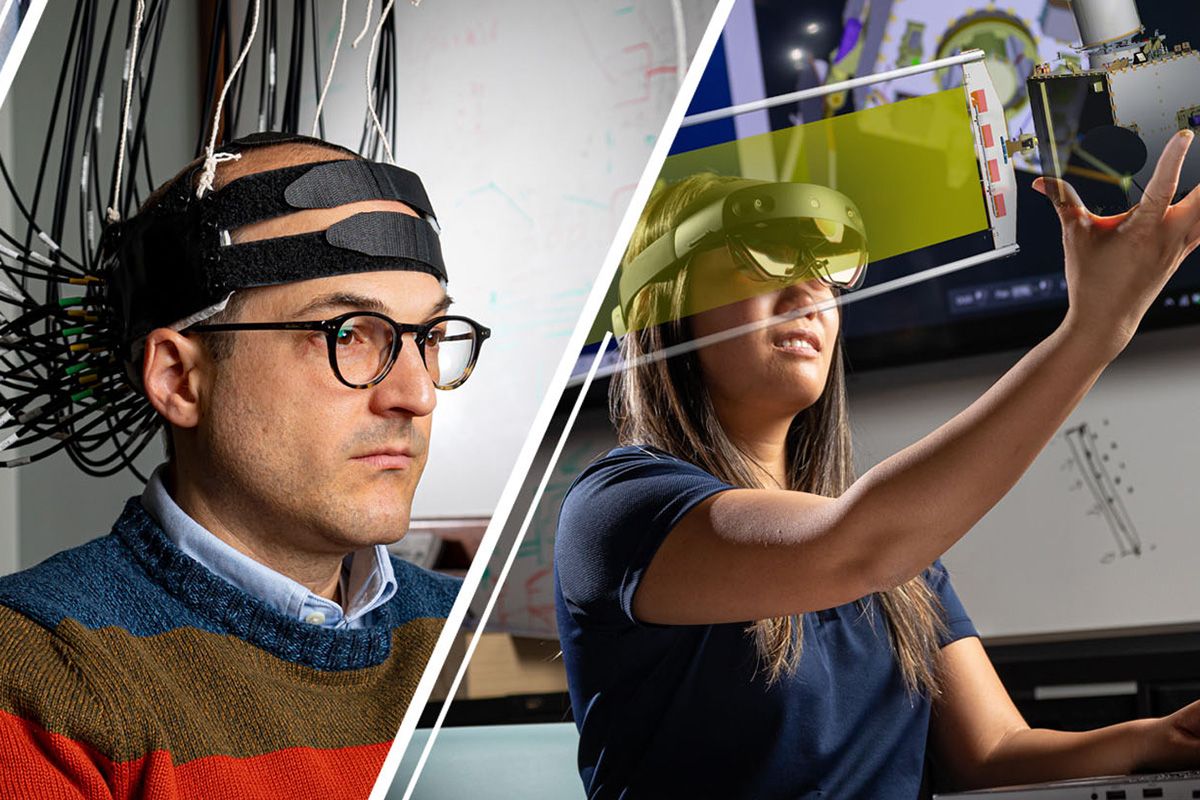

Our Contribution

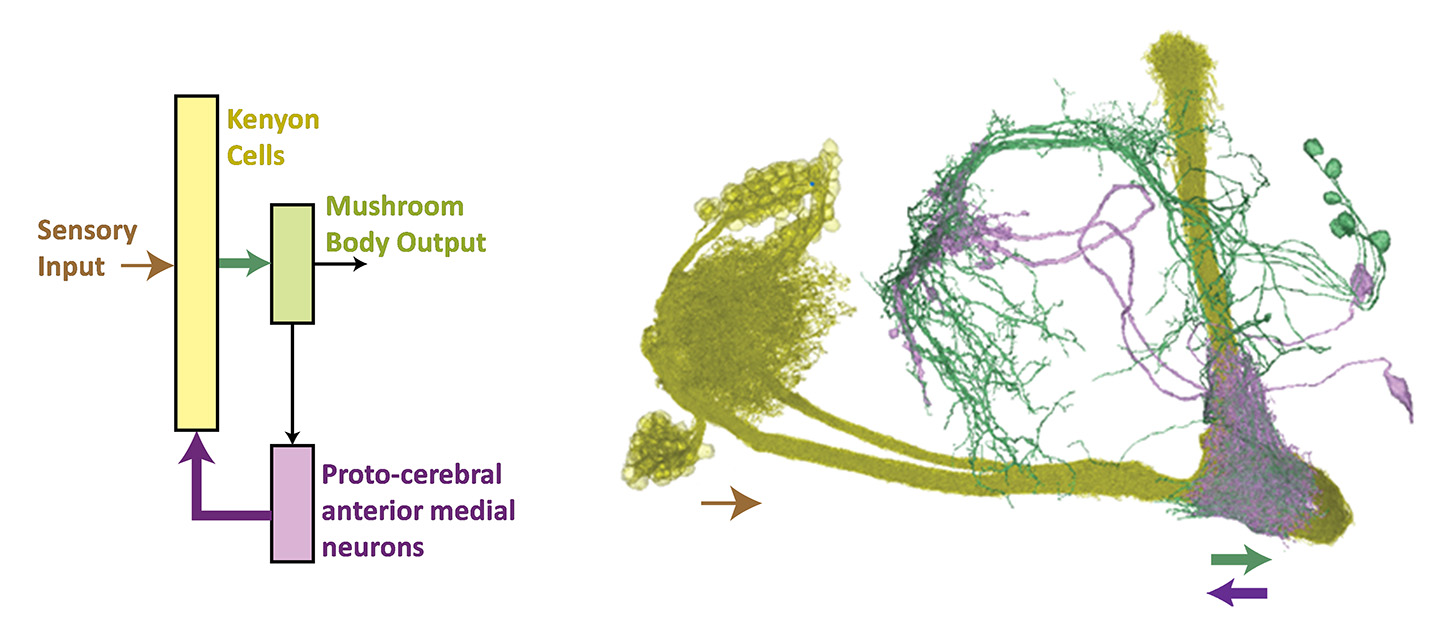

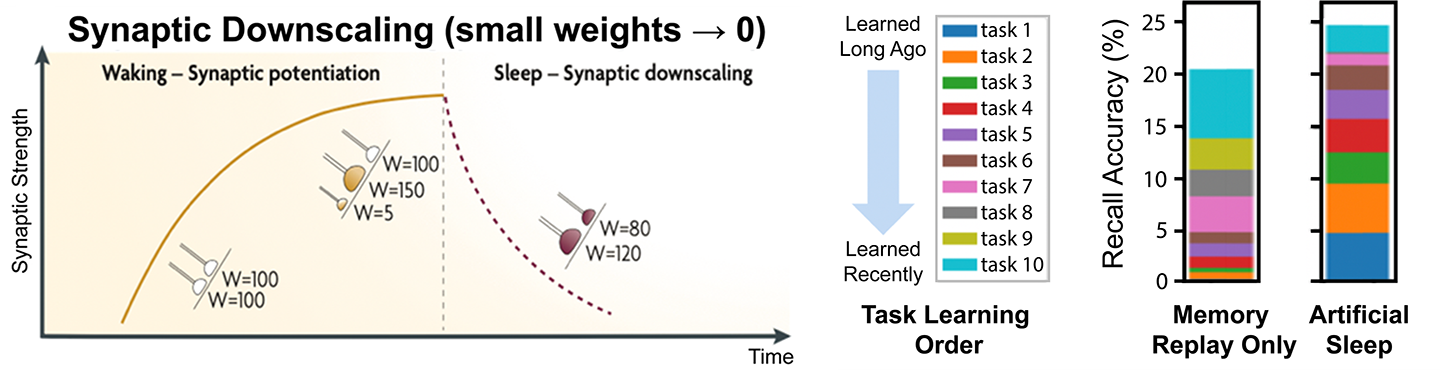

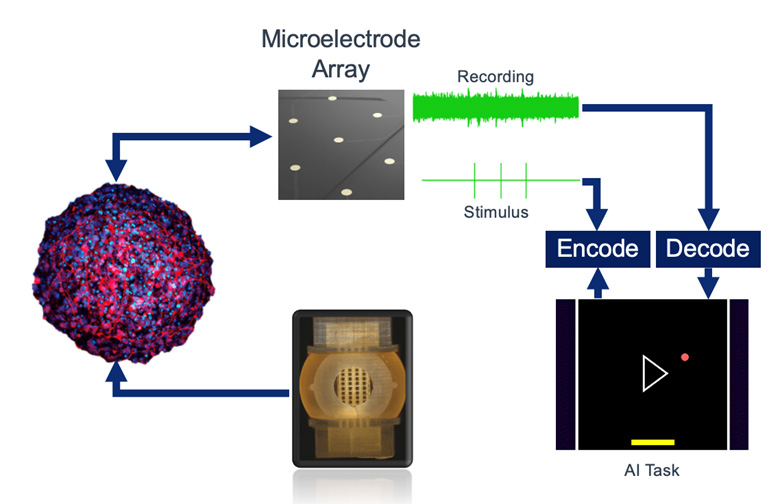

Researchers in APL’s Intelligent Systems Center (ISC) are extracting and translating design principles of neural connectivity and function from multiple species to create the next generation of robust, efficient intelligent systems operating in the real world.