Press Release

APL Shaping an Intelligent Approach to Disaster Response and Relief

In most cases, the preparation for and response to a natural disaster fall short of the damage it causes and the devastation it leaves behind. But a team of engineers and researchers in the Asymmetric Operations Sector (AOS) of the Johns Hopkins Applied Physics Laboratory (APL) is using its expertise in artificial intelligence (AI) to change that.

Last year, during Hurricane Florence, APL answered FEMA's call for help to anticipate and detect flooded sections of North Carolina and surrounding coastal areas. Because the Lab already had applicable software, along with decades of AI experience, the team turned its results around quickly. Within days, the Lab began providing FEMA with daily satellite and aerial images. These images were processed through multiple deep learning algorithms trained to segment water in imagery (producing what are called water-segmentation masks) and to detect communication towers, roads, bridges, vegetation, buildings and other items of interest.

The solution drew on the Lab's proven experience and growing body of work in AI, in this case an algorithm to detect and classify objects in overhead imagery. FEMA used the processed imagery to quickly map, assess and then monitor the extent of flooding.

“FEMA was pleased with the images,” said Joshua Broadwater, a remote sensing researcher and supervisor of AOS’ Imaging Systems Group. “This was a fantastic opportunity for the government to learn more about APL’s capabilities in disaster relief and the potential application of this technology for a wider range of situations.”

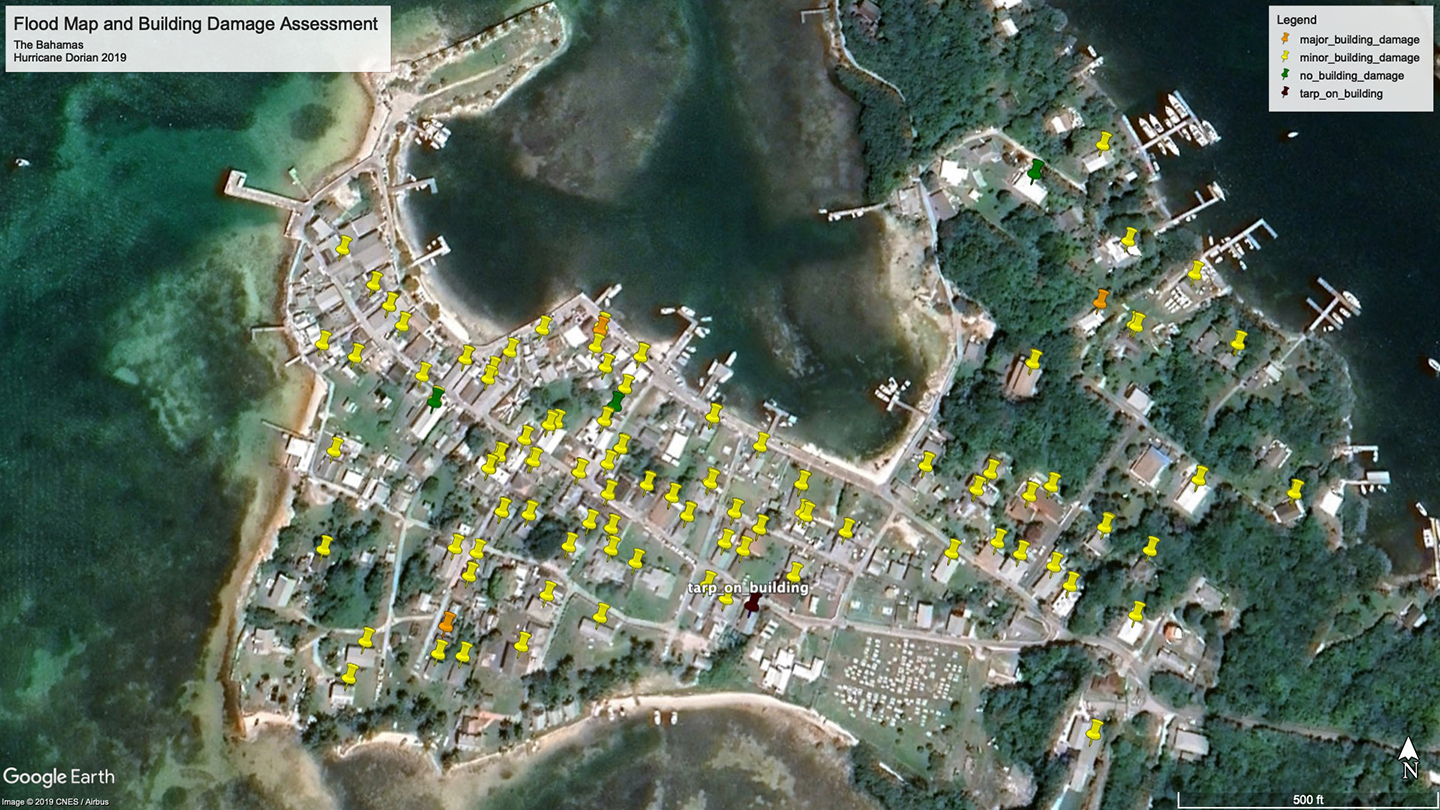

Based on the Florence experience, the Defense Department's Joint Artificial Intelligence Center (JAIC) tasked the Lab with taking its technology to the next level. APL researchers are creating a machine-learning capability to collect and process overhead imagery into three main types of analytics: flood segmentation (locating and marking areas of flooding), road analysis (identifying blocked and unblocked roads), and building damage assessment, which assigns each building to one of four damage categories defined by the FEMA protocol (no damage, minor damage, major damage and completely destroyed).